One of the most profound and mysterious principles in all of physics is the Born Rule, named after Max Born. In quantum mechanics, particles don’t have classical properties like “position” or “momentum”; rather, there is a wave function that assigns a (complex) number, called the “amplitude,” to each possible measurement outcome. The Born Rule is then very simple: it says that the probability of obtaining any possible measurement outcome is equal to the square of the corresponding amplitude. (The wave function is just the set of all the amplitudes.)

Born Rule: Probability(x) = |amplitude(x)|²

The Born Rule is certainly correct, as far as all of our experimental efforts have been able to discern. But why? Born himself kind of stumbled onto his Rule. Here is an excerpt from his 1926 paper:

That’s right. Born’s paper was rejected at first, and when it was later accepted by another journal, he didn’t even get the Born Rule right. At first he said the probability was equal to the amplitude, and only in an added footnote did he correct it to being the amplitude squared. And a good thing, too, since amplitudes can be negative or even imaginary!

The status of the Born Rule depends greatly on one’s preferred formulation of quantum mechanics. When we teach quantum mechanics to undergraduate physics majors, we generally give them a list of postulates that goes something like this:

1. Quantum states are represented by wave functions, which are vectors in a mathematical space called Hilbert space.

2. Wave functions evolve in time according to the Schrödinger equation.

3. The act of measuring a quantum system returns a number, known as the eigenvalue of the quantity being measured.

4. The probability of getting any particular eigenvalue is equal to the square of the amplitude for that eigenvalue.

5. After the measurement is performed, the wave function “collapses” to a new state in which the wave function is localized precisely on the observed eigenvalue (as opposed to being in a superposition of many different possibilities).

It’s an ungainly mess, we all agree. You see that the Born Rule is simply postulated right there, as #4. Perhaps we can do better.

Of course we can do better, since “textbook quantum mechanics” is an embarrassment. There are other formulations, and you know that my own favorite is Everettian (“Many-Worlds”) quantum mechanics. (I’m sorry I was too busy to contribute to the active comment thread on that post. On the other hand, a vanishingly small percentage of the 200+ comments actually addressed the point of the article, which was that the potential for many worlds is automatically there in the wave function no matter what formulation you favor. Everett simply takes them seriously, while alternatives need to go to extra efforts to erase them. As Ted Bunn argues, Everett is just “quantum mechanics,” while collapse formulations should be called “disappearing-worlds interpretations.”)

Like the textbook formulation, Everettian quantum mechanics also comes with a list of postulates. Here it is:

1. Quantum states are represented by wave functions, which are vectors in a mathematical space called Hilbert space.

2. Wave functions evolve in time according to the Schrödinger equation.

That’s it! Quite a bit simpler — and the two postulates are exactly the same as the first two of the textbook approach. Everett, in other words, is claiming that all the weird stuff about “measurement” and “wave function collapse” in the conventional way of thinking about quantum mechanics isn’t something we need to add on; it comes out automatically from the formalism.

The trickiest thing to extract from the formalism is the Born Rule. That’s what Charles (“Chip”) Sebens and I tackled in our recent paper:

Self-Locating Uncertainty and the Origin of Probability in Everettian Quantum Mechanics

Charles T. Sebens, Sean M. Carroll

A longstanding issue in attempts to understand the Everett (Many-Worlds) approach to quantum mechanics is the origin of the Born rule: why is the probability given by the square of the amplitude? Following Vaidman, we note that observers are in a position of self-locating uncertainty during the period between the branches of the wave function splitting via decoherence and the observer registering the outcome of the measurement. In this period it is tempting to regard each branch as equiprobable, but we give new reasons why that would be inadvisable. Applying lessons from this analysis, we demonstrate (using arguments similar to those in Zurek’s envariance-based derivation) that the Born rule is the uniquely rational way of apportioning credence in Everettian quantum mechanics. In particular, we rely on a single key principle: changes purely to the environment do not affect the probabilities one ought to assign to measurement outcomes in a local subsystem. We arrive at a method for assigning probabilities in cases that involve both classical and quantum self-locating uncertainty. This method provides unique answers to quantum Sleeping Beauty problems, as well as a well-defined procedure for calculating probabilities in quantum cosmological multiverses with multiple similar observers.

Chip is a graduate student in the philosophy department at Michigan, which is great because this work lies squarely at the boundary of physics and philosophy. (I guess it is possible.) The paper itself leans more toward the philosophical side of things; if you are a physicist who just wants the equations, we have a shorter conference proceeding.

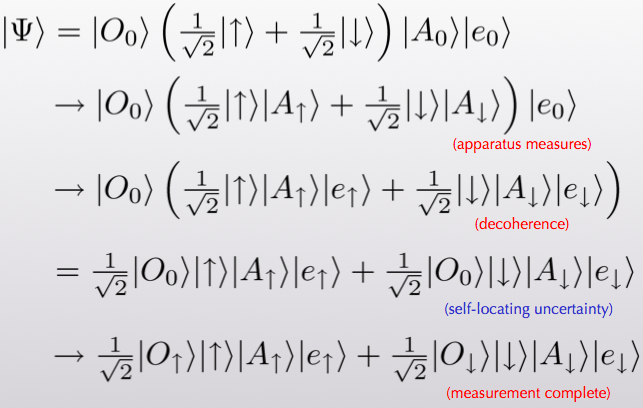

Before explaining what we did, let me first say a bit about why there’s a puzzle at all. Let’s think about the wave function for a spin, a spin-measuring apparatus, and an environment (the rest of the world). It might initially take the form

(α[up] + β[down] ; apparatus says “ready” ; environment₀). (1)

This might look a little cryptic if you’re not used to it, but it’s not too hard to grasp the gist. The first slot refers to the spin. It is in a superposition of “up” and “down.” The Greek letters α and β are the amplitudes that specify the wave function for those two possibilities. The second slot refers to the apparatus just sitting there in its ready state, and the third slot likewise refers to the environment. By the Born Rule, when we make a measurement the probability of seeing spin-up is |α|², while the probability for seeing spin-down is |β|².
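To make that last sentence concrete, here is a minimal Python sketch of the Born Rule for the two-outcome spin state. It is only an illustration, not anything from the paper: the function name `born_probabilities` and the particular values chosen for α and β are assumptions made up for this example. Note that β is purely imaginary, which is exactly the kind of amplitude that could never serve as a probability on its own.

```python
import math


def born_probabilities(amplitudes):
    """Return Born Rule probabilities |a|^2 for a list of complex amplitudes.

    The result is divided by the total weight, so the input does not
    have to be normalized in advance.
    """
    weights = [abs(a) ** 2 for a in amplitudes]
    total = sum(weights)
    if total == 0:
        raise ValueError("at least one amplitude must be nonzero")
    return [w / total for w in weights]


# Hypothetical amplitudes for the state alpha[up] + beta[down].
alpha = complex(0.6, 0.0)  # real amplitude
beta = complex(0.0, 0.8)   # purely imaginary amplitude

p_up, p_down = born_probabilities([alpha, beta])
print(f"P(up) = {p_up:.2f}, P(down) = {p_down:.2f}")
```

With these made-up values the probabilities come out to roughly 0.36 and 0.64, and they sum to one even though neither amplitude is itself a number between zero and one.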

In Everettian quantum mechanics (EQM), wave functions never collapse. The one we’ve written will smoothly evolve into something that looks like this:

α([up] ; apparatus says “up” ; environment₁)

+ β([down] ; apparatus says “down” ; environment₂). (2)

This is an extremely simplified situation, of course, but it is meant to convey the basic appearance of two separate “worlds.” The wave function has split into branches that don’t ever talk to each other, because the two environment states are different and will stay that way. A state like this simply arises from normal Schrödinger evolution from the state we started with.

So here is the problem. After the splitting from (1) to (2), the wave function coefficients α and β just kind of go along for the ride. If you find yourself in the branch where the spin is up, your coefficient is α, but so what? How do you know what kind of coefficient is sitting outside the branch you are living on? All you know is that there was one branch and now there are two. If anything, shouldn’t we declare them to be equally likely (so-called “branch-counting”)? For that matter, in what sense are there probabilities at all? There was nothing stochastic or random about any of this process; the entire evolution was perfectly deterministic. It’s not right to say “Before the measurement, I didn’t know which branch I was going to end up on.” You know precisely that one copy of your future self will appear on each branch. Why in the world should we be talking about probabilities?

Note that the pressing question is not so much “Why is the probability given by the wave function squared, rather than the absolute value of the wave function, or the wave function to the fourth, or whatever?” as it is “Why is there a particular probability rule at all, since the theory is deterministic?” Indeed, once you accept that there should be some specific probability rule, it’s practically guaranteed to be the Born Rule. There is a result called Gleason’s Theorem, which says roughly that the Born Rule is the only consistent probability rule you can conceivably have that depends on the wave function alone. So the real question is not “Why squared?”, it’s “Whence probability?”

Of course, there are promising answers. Perhaps the most well-known is the approach developed by Deutsch and Wallace based on decision theory. There, the approach to probability is essentially operational: given the setup of Everettian quantum mechanics, how should a rational person behave, in terms of making bets and predicting experimental outcomes, etc.? They show that there is one unique answer, which is given by the Born Rule. In other words, the question “Whence probability?” is sidestepped by arguing that reasonable people in an Everettian universe will act as if there are probabilities that obey the Born Rule. Which may be good enough.

But it might not convince everyone, so there are alternatives. One of my favorites is Wojciech Zurek’s approach based on “envariance.” Rather than using words like “decision theory” and “rationality” that make physicists nervous, Zurek claims that the underlying symmetries of quantum mechanics pick out the Born Rule uniquely. It’s very pretty, and I encourage anyone who knows a little QM to have a look at Zurek’s paper. But it is subject to the criticism that it doesn’t really teach us anything that we didn’t already know from Gleason’s theorem. That is, Zurek gives us more reason to think that the Born Rule is uniquely preferred by quantum mechanics, but it doesn’t really help with the deeper question of why we should think of EQM as a theory of probabilities at all.

Here is where Chip and I try to contribute something. We use the idea of “self-locating uncertainty,” which has been much discussed in the philosophical literature, and has been applied to quantum mechanics by Lev Vaidman. Self-locating uncertainty occurs when you know that there are multiple observers in the universe who find themselves in exactly the same conditions that you are in right now, but you don’t know which one of these observers you are. That can happen in “big universe” cosmology, where it leads to the measure problem. But it automatically happens in EQM, whether you like it or not.

Think of observing the spin of a particle, as in our example above. The steps are:

1. Everything is in its starting state, before the measurement.

2. The apparatus interacts with the system to be observed and becomes entangled. (“Pre-measurement.”)

3. The apparatus becomes entangled with the environment, branching the wave function. (“Decoherence.”)

4. The observer reads off the result of the measurement from the apparatus.

The point is that in between steps 3. and 4., the wave function of the universe has branched into two, but the observer doesn’t yet know which branch they are on. There are two copies of the observer that are in identical states, even though they’re part of different “worlds.” That’s the moment of self-locating uncertainty. Here it is in equations, although I don’t think it’s much help.
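The original figure is not reproduced here, so what follows is a hedged reconstruction rather than the equations as they appeared. It extends the notation of (1) and (2) with an explicit observer slot, and the labels A (apparatus), O (observer), and E (environment) are assumptions introduced for this sketch. For simplicity the environment states are left unchanged in the final step.

```latex
\begin{align*}
\text{start:} \quad
  & \big(\alpha\,|{\uparrow}\rangle + \beta\,|{\downarrow}\rangle\big)
    \,|A_{\text{ready}}\rangle\,|O_{\text{ready}}\rangle\,|E_0\rangle \\
\text{pre-measurement:} \quad
  & \big(\alpha\,|{\uparrow}\rangle|A_{\text{up}}\rangle
       + \beta\,|{\downarrow}\rangle|A_{\text{down}}\rangle\big)
    \,|O_{\text{ready}}\rangle\,|E_0\rangle \\
\text{decoherence:} \quad
  & \alpha\,|{\uparrow}\rangle|A_{\text{up}}\rangle|O_{\text{ready}}\rangle|E_1\rangle
    + \beta\,|{\downarrow}\rangle|A_{\text{down}}\rangle|O_{\text{ready}}\rangle|E_2\rangle \\
\text{readout:} \quad
  & \alpha\,|{\uparrow}\rangle|A_{\text{up}}\rangle|O_{\text{up}}\rangle|E_1\rangle
    + \beta\,|{\downarrow}\rangle|A_{\text{down}}\rangle|O_{\text{down}}\rangle|E_2\rangle
\end{align*}
```

The decoherence line is the one that matters: the two terms already carry different environment states, so they are separate branches, yet the observer factor is the same "ready" state in both. That is the window of self-locating uncertainty.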

You might say “What if I am the apparatus myself?” That is, what if I observe the outcome directly, without any intermediating macroscopic equipment? Nice try, but no dice. That’s because decoherence happens incredibly quickly. Even if you take the extreme case where you look at the spin directly with your eyeball, the time it takes the state of your eye to decohere is about 10⁻²¹ seconds, whereas the timescales associated with the signal reaching your brain are measured in tens of milliseconds. Self-locating uncertainty is inevitable in Everettian quantum mechanics. In that sense, probability is inevitable, even though the theory is deterministic: in the phase of uncertainty, we need to assign probabilities to finding ourselves on different branches.

So what do we do about it? As I mentioned, there’s been a lot of work on how to deal with self-locating uncertainty, i.e. how to apportion credences (degrees of belief) to different possible locations for yourself in a big universe. One influential paper is by Adam Elga, and comes with the charming title of “Defeating Dr. Evil With Self-Locating Belief.” (Philosophers have more fun with their titles than physicists do.) Elga argues for a principle of Indifference: if there are truly multiple copies of you in the world, you should assume equal likelihood for being any one of them. Crucially, Elga doesn’t simply assert Indifference; he actually derives it, under a simple set of assumptions that would seem to be the kind of minimal principles of reasoning any rational person should be ready to use.

But there is a problem! Naïvely, applying Indifference to quantum mechanics just leads to branch-counting — if you assign equal probability to every possible appearance of equivalent observers, and there are two branches, each branch should get equal probability. But that’s a disaster; it says we should simply ignore the amplitudes entirely, rather than using the Born Rule. This bit of tension has led to some worry among philosophers who worry about such things.

Resolving this tension is perhaps the most useful thing Chip and I do in our paper. Rather than naïvely applying Indifference to quantum mechanics, we go back to the “simple assumptions” and try to derive it from scratch. We were able to pinpoint one hidden assumption that seems quite innocent, but actually does all the heavy lifting when it comes to quantum mechanics. We call it the “Epistemic Separability Principle,” or ESP for short. Here is the informal version (see paper for pedantically careful formulations):

ESP: The credence one should assign to being any one of several observers having identical experiences is independent of features of the environment that aren’t affecting the observers.

That is, the probabilities you assign to things happening in your lab, whatever they may be, should be exactly the same if we tweak the universe just a bit by moving around some rocks on a planet orbiting a star in the Andromeda galaxy. ESP simply asserts that our knowledge is separable: how we talk about what happens here is independent of what is happening far away. (Our system here can still be entangled with some system far away; under unitary evolution, changing that far-away system doesn’t change the entanglement.)

The ESP is quite a mild assumption, and to me it seems like a necessary part of being able to think of the universe as consisting of separate pieces. If you can’t assign credences locally without knowing about the state of the whole universe, there’s no real sense in which the rest of the world is really separate from you. It is certainly implicitly used by Elga (he assumes that credences are unchanged by some hidden person tossing a coin).

With this assumption in hand, we are able to demonstrate that Indifference does not apply to branching quantum worlds in a straightforward way. Indeed, we show that you should assign equal credences to two different branches if and only if the amplitudes for each branch are precisely equal! That’s because the proof of Indifference relies on shifting around different parts of the state of the universe and demanding that the answers to local questions not be altered; it turns out that this only works in quantum mechanics if the amplitudes are equal, which is certainly consistent with the Born Rule.

See the papers for the actual argument — it’s straightforward but a little tedious. The basic idea is that you set up a situation in which more than one quantum object is measured at the same time, and you ask what happens when you consider different objects to be “the system you will look at” versus “part of the environment.” If you want there to be a consistent way of assigning credences in all cases, you are led inevitably to equal probabilities when (and only when) the amplitudes are equal.

What if the amplitudes for the two branches are not equal? Here we can borrow some math from Zurek. (Indeed, our argument can be thought of as a love child of Vaidman and Zurek, with Elga as midwife.) In his envariance paper, Zurek shows how to start with a case of unequal amplitudes and reduce it to the case of many more branches with equal amplitudes. The number of these pseudo-branches you need is proportional to — wait for it — the square of the amplitude. Thus, you get out the full Born Rule, simply by demanding that we assign credences in situations of self-locating uncertainty in a way that is consistent with ESP.
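The counting step in that reduction can be sketched in a few lines of Python. This is only a toy illustration of the bookkeeping, not Zurek's construction or the argument in our paper: it assumes the squared amplitudes are exact rational numbers, and the function names `fine_grain` and `credences_by_indifference` are invented for this example.

```python
from fractions import Fraction
from math import lcm


def fine_grain(squared_amplitudes):
    """Split each branch into equal-weight pseudo-branches.

    Takes rational squared amplitudes that sum to 1 and returns
    (counts, n): branch i becomes counts[i] pseudo-branches, each
    with squared amplitude 1/n.
    """
    weights = [Fraction(w) for w in squared_amplitudes]
    if sum(weights) != 1:
        raise ValueError("squared amplitudes must sum to 1")
    # A common denominator makes every pseudo-branch carry the same weight.
    n = lcm(*(w.denominator for w in weights))
    counts = [int(w * n) for w in weights]
    return counts, n


def credences_by_indifference(counts):
    """Assign equal credence to every pseudo-branch, then total per branch."""
    total = sum(counts)
    return [Fraction(c, total) for c in counts]


# Hypothetical unequal branches: |alpha|^2 = 1/3 and |beta|^2 = 2/3.
weights = [Fraction(1, 3), Fraction(2, 3)]
counts, n = fine_grain(weights)
credences = credences_by_indifference(counts)
print(counts, n, credences)
```

Here the 1/3 branch becomes one pseudo-branch and the 2/3 branch becomes two, all with equal amplitude, so treating the pseudo-branches as equally likely returns credences equal to the squared amplitudes. Irrational squared amplitudes are outside the scope of this sketch; they need a limiting or continuity argument on top of the counting.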

We like this derivation in part because it treats probabilities as epistemic (statements about our knowledge of the world), not merely operational. Quantum probabilities are really credences — statements about the best degree of belief we can assign in conditions of uncertainty — rather than statements about truly stochastic dynamics or frequencies in the limit of an infinite number of outcomes. But these degrees of belief aren’t completely subjective in the conventional sense, either; there is a uniquely rational choice for how to assign them.

Working on this project has increased my own personal credence in the correctness of the Everett approach to quantum mechanics from “pretty high” to “extremely high indeed.” There are still puzzles to be worked out, no doubt, especially around the issues of exactly how and when branching happens, and how branching structures are best defined. (I’m off to a workshop next month to think about precisely these questions.) But these seem like relatively tractable technical challenges to me, rather than looming deal-breakers. EQM is an incredibly simple theory that (I can now argue in good faith) makes sense and fits the data. Now it’s just a matter of convincing the rest of the world!

I guess this is trivial but another difficulty with equiprobable universes is the following: if the squared amplitudes are incommensurate* then the right probability law cannot be obtained with a finite number of universes.

* (say alpha squared = 1/sqrt(2) and beta squared = 1 – alpha squared)

Sorry, missed your reply for a while, and I’m not sure if you’re still wading through the comments.

“(1) off-diagonal terms are small, so branches evolve almost-independently, therefore (2) we can assign probabilities to branches, and once we do that we can (3) ask about the probability of the off-diagonal terms growing large and witnessing interference between branches. ”

Step (1) cannot mention “almost-independence.” It can only mention small numbers, which, if they were interference terms (a probabilistic notion; look at what interference is in the two-slit experiment), would be measures of the degree of independence. Then, in step (2), in all the approaches I’m familiar with, we talk about emergent observers living in the branches. I’m not sure I understand the legitimacy of talking about emergent observers. All I see, at this point, is a big mushy wave function with some small off-diagonal elements in some of its representations. And now (I would obviously say) step three makes no sense to me.

The problem with presenting MWI in a textbook is that the 2 mathematical postulates (1) and (2) lack any connection to experiments and observations. And nobody has been able to come up with simple MWI versions of the rest of the postulates, as this article and the subsequent discussions make clear.

And MWI is not helped by all the discussions about the Born Rule. I don’t know why people are so obsessed with deriving it from (1) and (2). Just postulate it and move on. Until the preferred basis problem is resolved or better understood, any proof will be flawed.

The real contribution of Everett was the removal/replacement of the collapse postulate. I would like to see the MWI postulates/rules covering the same areas as the standard postulates.

Hi Sean,

It would be great if your next book were on EQM.

Thanks,

Jake

The MWI is a tempting siren. Telling Sean that he is wrong will not work because he is stuck in the limbo of thinking it could be right (which would be cool) and knowing there is no way to prove it wrong. I could do some probability derivation tricks, but this is probably not the right crowd. Maybe we should come back to the postulates of quantum when everyone has cooled off a bit. In my experience the best thing to do with someone stuck in a quantum spiral is to give them a different paradox that will distract them. A lot of the confusion I’ve seen in quantum is simply due to the semantics of the theory. Don’t worry Sean I’ll make up an easy relativity problem to help you get out of your quantum doldrums. Be right back…..

Funny, as a layman, I always thought that was the practical meaning of the probability. The world “splits” and most of the split ends up where most of the probability lies. Yes, there are worlds where the particle ends up in some remote corner of the universe rather than hitting the screen in one of the bright bars of the twin slit, but most of them are worlds where the particle lands on the bright bars (in proportion to the square of the amplitude).

“Exactly how and when branching happens” is the rub. It’s the giant elephant for MWI because you are at bottom replacing the quantum realm with a classical one (explaining quantum mechanics through a classical artifice).

@Jerry: Since Sean did not give you an elaborate answer, I will try to answer your interesting question as a physicist, since many of my friends and relatives are intelligent physicians and they ask such questions! Although I am not convinced by MWI, I believe there is hardly any doubt that Quantum Mechanics is mostly right. Every object has a de Broglie wavelength = h/(mv), where h is Planck’s constant, m is mass and v is the speed. So for small objects like electrons the wavelength will be small and sometimes measurable, as in an electron microscope. For you and me it is extremely small, like 10^(-35) cm or so. It is too small to produce any measurable effect. Thus the whole universe and all of us could have a wave function, but it will be hard to verify. Incidentally, closeness is a relative concept. The microscopic universe is essentially vacuum. An analogy to an atom is a football field with electrons in the stadium and the nucleus roughly a football at the center of the stadium, with vacuum in between. Of course this is a crude description for visualization. For calculational purposes they are mostly wavy stuff.

You do realize that people at the LHC are claiming that the Higgs Boson doesn’t have the correct mass for the multiverse theories, right?

Is there a good reason why the precise verbal description of Born’s rule, that the probability equals the abs squared amplitude (as in the mathematical expression) is abbreviated to just “the squared amplitude?” Is it a standard abbreviation? Until I realized that that’s how this post calls it in every instance, I was sure it’s a mistake.

It’s just convenient and short.

I have an interpretational question that seems appropriate in this context. As I understand it, Feynman’s sum-over-histories approach gives an amplitude to each history, and you can apply the Born rule to get a probability for the given history. Is it completely crazy to apply this approach to the whole universe, and to postulate that there really only is one world, but it’s drawn from the space of all possible universe-histories with a probability distribution given by the Born rule applied to the amplitudes of the different histories?

My intuition about this is that, until I start talking about the One True Universe, I’m just describing one way of formulating Everettian QM. And that the strongest objections to postulating the One True Universe are philosophical. It violates symmetry, it’s not useful for talking about physics, and it violates Occam’s razor. But it might also be the case that there’s a fundamental reason the One True Universe model can’t be right. It might reflect a fundamental misunderstanding I have.

So am I just wrong or merely multiplying entities beyond necessity?

Probability? I think it is time to re-interpret Copenhagen and discuss the absolute.

Einstein couldn’t find it because the speed of light stood in his way. And science can’t find it because they went the wrong way.

Certainty any One?

=

Thank-you Dr Vasavada-

I do understand that in normal circumstances the tiny universe is nearly empty. Some exceptions I guess would be neutron stars and the plasma of particles in the first nanoseconds of the universe.

It appears to some of us amateurs that photons, electrons, protons, etc travel in waves and that their exact position and momentum cannot be known, as per the uncertainty principle.

It was my understanding that the “wave function” describes all the possible positions of the particle and the likelihood of the particle being in any of the possible positions was given by the square of the amplitude.

I was also of the belief that when a particle interacts with another particle, such as a photon kicking an electron into a higher energy level, for that instant the wave function collapses and the location of the event can be observed. A measurement is made.

My thought is that I cannot get my head around a single wave function describing a complex living creature, but I can certainly imagine it as the integration of all the wave functions of the individual particles, i.e. the sound of the symphony heard from all the instruments.

Please excuse my naivety in my posts- but this blog is followed by many of us trying to achieve better understanding.

I work in a busy Emergency Dept so I live the “many worlds” theory on a daily basis.

Thank-you all again,

Sean,

I have a different interpretation. Whenever a measurement takes place, the universe splits into 100 universes. The number of universes containing outcome A is just the probability times 100, etc. Now, if the probabilities are decimals, then the universe splits into however many universes as needed to have whole numbers of universes with each outcome. If the probability is, for example, 1/3 = 0.333333333…, then the universe splits into an infinite number of copies.

How do you like my interpretation?

I think that the fact that psi^star psi is the time component of a conserved four-current density should play a major role in any explanation of why psi^star psi represents a probability density.

I’m not a physicist. I am an armchair philosopher, however. I believe that a satisfying answer to why the probability is the square of the amplitude is that there are TWO state vectors: one evolving forward in time and the other in reverse. I haven’t read all the responses, but it seems to me that many physicists are not aware of Yakir Aharonov’s work (weak measurements, etc.). Here’s a link to the arXiv server of a paper titled “Measurement and Collapse within the Two-State-Vector Formalism”:

http://xxx.lanl.gov/pdf/1406.6382

For me this provides a reasonable answer to the “collapse” issue.

@Jerry Salomone: You did raise interesting questions. So I jumped in! Let me add something to the concept of wave function for a composite system. Up to a certain energy, a composite particle may behave like an elementary particle. For example, for low energies (nuclear physics) there is no need to consider the quark substructure of protons and neutrons. Writing a wave function of a proton at low energies in terms of products of wave functions of quarks, while not wrong, is unnecessarily complicated, and one gets tangled in an unnecessary mess. Until high energies it is perfectly OK to write a single wave function for a nucleon. Only at higher energies does one have to consider quark substructure. Similarly, in condensed matter physics, a whole atom may be regarded as having a single wave function.

Another thing: people are finding quantum effects for larger and larger systems e.g. in lasers, superconductivity, Bose-Einstein condensation and entanglements have been found at distances of several miles. Many physicists think there is nothing like classical mechanics! It is all quantum! So my guess is that eventually people may find quantum effects in biological systems and perhaps in brain and consciousness!

Thanks for working in emergency dept. I may need people like you some time!

You listed 2 postulates from Everett’s QM.

You should also add:

3. the wave function should describe all the particles that form an observer and its memory

4. you can deduce “relative states” of the model by deducing what the modeled observer has measured by examining its memory

Including the observer and its memory is how Everett avoided the collapse.

As you note in the paper, your approach directly shows how to derive the Born rule in finite dimensional Hilbert spaces when the coefficients of the wavefunction in your preferred basis are square-root-of-rational multiples of one another. By appealing to some continuity principle, you extend to the case where the coefficients are arbitrary real multiples of each other.

But you don’t say anything about when the coefficients are non-real multiples of each other (which is generically the case). Can you account for non-real coefficients?

Given a specific state, you can always choose basis vectors such that the amplitudes are real.

I’m curious.

What consideration have you given to actually doing what Everett writes in his paper: using the mathematics of particle physics to construct an observer, an “automatically functioning machine with sensors and a memory,” as he writes?

In 1956, such a wave equation describing that large number of particles was surely a hypothetical notion.

But with the computing power of 2014, it should be within reach.

Sean,

I very much appreciate your tie-in of self-location to the EQM Born rule. I have done something similar in my recent dissertation, although taking, I think, a quite different approach. Our starting points (self-location) and ending points (Born rule), however, are much the same, and I think we are pumping the same intuitions, one way or another.

Here is my reaction so far. First of all, I think anything that claims to be a response to the EQM Born rule objection must add something new beyond Gleason and Gleason-noncontextuality (GNC). Since GNC + Gleason’s theorem = Born rule (an analytic fact) this is an absolute must, although it seems not to be widely recognized for some reason.

The problem for me lies in whether ESP is any more intuitive and natural an assumption than GNC, from an EQM perspective. You defend ESP in physicalist terms, by talking about physical intuitions, which granted you are trying to minimize. However, the whole point of EQM, is that the Born rule follows simply from the idea of a purely formal system (without any prior physical interpretation) in which there are these emergent phenomena called “observers”. Now we ask, assuming that the system describes multiple outcomes for one of these observers when they perform a measurement, how should that observer assign probabilities to these outcomes? Prior ideas of what is “natural” physically are not allowed. Only the formal wavefunction is allowed, plus presumably some principles or other to enable us to discern observers within the wavefunction. But these would be like principles of finding eddies in a river; they would describe emergent phenomena that are not fundamental constituents of the system. The wavefunction itself need not be “physical” but could be a simulation on a computer, or just left as an abstract mathematical object. It is purely formal. There is no inherent “space” and “time” and so on, because these are not formal entities, but physical ones.

So my question: why not just stick with GNC, since it is very analytic, and perfectly clear what it means? It requires no hand-wavey physical principles, no environment or environment-induced decoherence either — and would even be perfectly applicable to a solipsistic wavefunction, should we ever encounter one. Also, its truth seems much easier to justify, given its clarity, so long as one is an objectivist about probabilities (not excluding here the possibility that subjectivists may have their own reasons for accepting it). Thus, it can be justified (or refuted) prior to any discussion of EQM, at the level of choosing a foundation for probability theory. This cuts off the Born rule objectors before we even get to QM and challenges them to prove their faith in world-counting first, then argue against many worlds.

If this sounds critical, believe me when I say I am on your side! I just wonder if it might be more fruitful to challenge the objectors where they are truly weakest: their unquestioned faith in world counting. I have never understood where one gets world counting or observer counting as a basic a priori principle, in the first place, but many people out there seem to think it is “just obvious”. But without it, there is no really viable Born rule objection to EQM, because there is no reasonable alternative measure on the table.

My own take on this can be found in my dissertation. Ch. 4 is my interpretation of probability (neither Bayesian nor frequentist, but algorithmic) and Ch. 5 is my discussion of self-location and self-selection, including Sleeping Beauty. Ch. 8 uses this foundation to make a start on an algorithmic reconstruction of QM:

http://hdl.handle.net/10315/27640